Having worked closely with Medical Affairs and MSL teams across hundreds of scientific congresses — from focused specialty meetings to the largest global conferences — we’ve seen firsthand how difficult it can be to extract meaningful insights from the flood of new data presented at these events.

One pattern has become increasingly clear.

The volume of scientific information presented at congresses is growing faster than any team can realistically process manually. Major meetings now generate thousands of abstracts across dozens of therapeutic areas. Even well-staffed Medical Affairs teams can spend days reviewing the material, trying to identify what actually matters.

This is one reason artificial intelligence has started to appear in the daily workflow of Medical Science Liaisons.

But the assumption that AI automatically produces better intelligence is wrong.

In our experience, the difference between teams that benefit from AI and those that simply generate more noise comes down to something simple: how the technology is used.

When AI is applied casually, it produces summaries that may be technically accurate but strategically meaningless.

When it is applied deliberately, it can help Medical Affairs teams analyze scientific information faster while still relying on the clinical judgment and experience that MSLs bring to the process.

Not All AI Tools Are Appropriate for Medical Affairs

One of the first decisions teams face is which AI systems they can trust.

What most teams underestimate is how critical this decision actually is.

Not all AI models are designed for regulated environments. Public tools may seem convenient, but they introduce real risks around data privacy, intellectual property, and lack of transparency in training sources.

Uploading sensitive scientific or competitive information into unsecured systems is not just inefficient.

It’s risky.

The teams that get this right treat AI infrastructure as a strategic decision — not a convenience. Secure, domain-specific models are not optional in Medical Affairs.

In practice, organizations that successfully adopt AI rely on enterprise-grade platforms designed specifically for scientific workflows.

These systems are:

- trained on biomedical data

- capable of handling complex clinical terminology

- transparent in how data is processed and protected

For Medical Affairs teams, those safeguards are foundational.

The Real Skill Is Not AI — It’s Asking Better Questions

Many people assume AI tools automatically understand what users want.

They do not.

The biggest mistake we see is when users provide vague instructions and expect meaningful insights in return. When the prompt is unclear, the output usually reflects that lack of clarity.

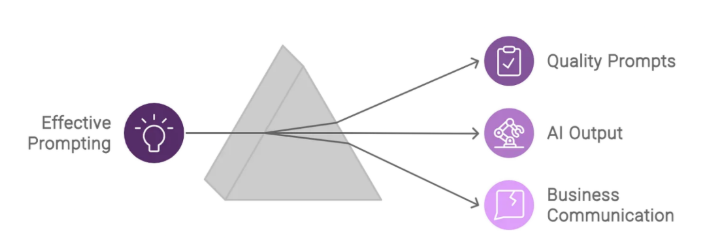

This is where prompt engineering becomes important.

Despite the technical-sounding name, prompt engineering is simply the discipline of communicating clearly with the system. The structure of the question determines the usefulness of the answer.

In our experience, MSL teams often discover that small improvements in how they phrase prompts dramatically improve the quality of AI-generated summaries.

For example, asking an AI model to summarize an abstract about heart disease will usually produce a broad overview. But asking the model to identify key efficacy findings, safety signals, and potential clinical implications produces a far more useful output.

Clarity produces relevance.

Source: medium.com

Context Is What Makes AI Useful

Another critical factor in AI-assisted analysis is context.

AI systems interpret information based on the instructions and background they receive. When that context is missing, the summaries they generate often lack the nuance required for meaningful Medical Affairs discussions.

In practice, this means providing the model with details about the therapeutic area, patient population, and clinical questions that matter most to your organization.

For example, a prompt that simply asks for a summary of a Type 2 diabetes abstract will usually produce a generic description of the study. But when the request specifies treatment guidelines, recent clinical trials, adherence challenges, or specific patient populations, the resulting output becomes far more relevant.

What most teams discover is that AI performs best when it is treated almost like a junior analyst — capable of processing large amounts of information, but dependent on clear direction.

Practical Prompts That Help MSLs Work Faster

Once teams understand how to structure prompts effectively, AI becomes significantly more useful for day-to-day Medical Affairs work.

The prompts that consistently produce the most reliable results typically define these three elements clearly:

- the analytical task

- the scientific context

- the format of the expected output

When those elements are present, AI tools can help MSLs process congress abstracts quickly while still preserving scientific rigor.
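To make the three elements concrete, here is a minimal sketch, assuming a team assembles its prompts in Python before pasting or sending them to whatever secure platform it uses. The `PromptSpec` class and its field names are hypothetical illustrations, not part of any specific tool. It simply refuses to build a prompt when the task, context, or output format is left blank.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the three prompt elements described above."""
    task: str            # the analytical task
    context: str         # the scientific context
    output_format: str   # the format of the expected output

    def render(self, abstract_text: str) -> str:
        # Collect any element that is empty or whitespace-only.
        missing = [name for name, value in (
            ("task", self.task),
            ("context", self.context),
            ("output_format", self.output_format),
        ) if not value.strip()]
        if missing:
            raise ValueError(f"Prompt is missing: {', '.join(missing)}")
        # Keep each element in its own labeled section, followed by the abstract.
        return (
            f"Task: {self.task}\n"
            f"Context: {self.context}\n"
            f"Output format: {self.output_format}\n\n"
            f"Abstract:\n{abstract_text}"
        )


spec = PromptSpec(
    task="Identify key efficacy findings, safety signals, and potential clinical implications.",
    context="Type 2 diabetes; adults with adherence challenges; relate findings to current treatment guidelines.",
    output_format="Three short bulleted sections: Efficacy, Safety, Clinical implications.",
)
print(spec.render("<paste abstract text here>"))
```

The explicit check is the point of the design: a prompt missing its context or output format tends to produce exactly the generic summaries described earlier, so it is cheaper to catch that before the request is sent.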

Below are several prompt structures that consistently produce useful results when analyzing congress abstracts.

| Objective | Example Prompt |
| --- | --- |
| Structure a comprehensive summary | Summarize the following congress abstract for a Medical Affairs team. Focus on background, methods, key findings, and conclusions while highlighting clinical significance. |
| Analyze the competitive landscape | Based on the findings in this abstract, summarize the competitive advantages and potential limitations of the therapies discussed within our therapeutic area. |
| Compare multiple abstracts | Compare the results of the following two abstracts on [disease state]. Focus on differences in study design, patient populations, endpoints, and overall conclusions. |
| Generate follow-up scientific questions | Generate five scientific exchange questions an MSL could ask a Key Opinion Leader based on the findings and limitations of this abstract. |
| Translate findings into field insights | Transform the results of this abstract into actionable insights for an MSL, including potential discussion points for KOL interactions and implications for future research. |

These prompt structures move AI beyond simple summarization toward something more valuable: structured scientific analysis.

Moving From Abstract Summaries to Strategic Insights

Summarizing abstracts is only the starting point.

The real value of AI appears when it helps Medical Affairs teams move beyond summarization and toward interpretation.

In our experience, the most useful applications involve comparing multiple abstracts, identifying emerging scientific themes, or detecting early signals about shifts in treatment approaches.

AI tools can highlight differences in study design, patient populations, endpoints, or safety signals across multiple studies. Performing this type of comparison manually can require hours of analysis.

However, human expertise remains essential.

AI can detect patterns, but it cannot determine which patterns actually matter for clinical strategy. That interpretation still belongs to experienced MSLs who understand the broader therapeutic landscape.

Adapting AI Summaries for Different Audiences

Another powerful capability of AI tools is the ability to tailor scientific information for different audiences.

Not every stakeholder requires the same level of detail. Medical Affairs teams may want structured scientific summaries, while leadership teams often need concise explanations of strategic implications. Patient advocacy groups may require plain-language explanations that focus on patient impact.

AI can generate these variations quickly when the intended audience is clearly specified in the prompt.

| Audience | Key Prompt Words |
| --- | --- |
| Medical Affairs team | summarize, abstract, key findings, clinical significance, background, methods, conclusions, implications |
| Non-expert audience (patient advocacy groups) | plain language summary, easy to understand, patient impact, key takeaways |
| Leadership / executive audience | executive summary, concise, strategic implications, competitive landscape |
| Physicians / clinicians | clinical practice implications, treatment decisions, impact on patient care, standard of care |

When the audience is defined clearly, AI models generally adjust tone, terminology, and depth to match. This allows Medical Affairs teams to generate multiple versions of the same scientific content while maintaining consistency across communications.
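One way to keep those versions consistent is to store the audience cues once and reuse them. The sketch below is a minimal illustration, assuming the keyword lists are taken verbatim from the audience table; the `AUDIENCES` mapping, its short keys, and the `audience_instruction` function are hypothetical names, and the resulting line would be added to an otherwise unchanged prompt.

```python
# Hypothetical mapping: short key -> (audience label, key prompt words from the table).
AUDIENCES: dict[str, tuple[str, list[str]]] = {
    "medical_affairs": ("Medical Affairs team", [
        "summarize", "abstract", "key findings", "clinical significance",
        "background", "methods", "conclusions", "implications"]),
    "patient_advocacy": ("non-expert audience (patient advocacy groups)", [
        "plain language summary", "easy to understand", "patient impact", "key takeaways"]),
    "leadership": ("leadership / executive audience", [
        "executive summary", "concise", "strategic implications", "competitive landscape"]),
    "clinicians": ("physicians / clinicians", [
        "clinical practice implications", "treatment decisions",
        "impact on patient care", "standard of care"]),
}


def audience_instruction(audience: str) -> str:
    """Return one instruction line naming the audience and its prompt cues."""
    try:
        label, keywords = AUDIENCES[audience]
    except KeyError:
        raise ValueError(f"Unknown audience: {audience!r}") from None
    return f"Audience: {label}. Use these cues: {', '.join(keywords)}."


# Same abstract, one instruction line per audience.
for key in AUDIENCES:
    print(audience_instruction(key))
```

Because only the audience line changes between versions, the underlying scientific request stays identical, which is what keeps the variations consistent with one another.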

AI Is Expanding the Analytical Capabilities of MSL Teams

The volume of scientific data presented at congresses will only continue to grow.

AI tools are already helping MSLs process information faster, identify patterns across large datasets, and translate complex findings into insights that leadership teams can act on.

But technology alone will not solve the problem.

The biggest difference we see between average teams and top-performing ones is this: the best teams don’t rely on AI to think for them — they use it to think faster and more clearly.

They:

- define clear prompting strategies

- provide structured context

- combine AI outputs with scientific expertise

When that balance is achieved, AI becomes more than a summarization tool. It becomes an analytical advantage.

Why AI Works Best Within a Larger System

As congress data continues to grow in scale, managing abstracts alongside presentations, KOL discussions, and internal notes becomes increasingly difficult.

Over the years, we have seen many Medical Affairs teams struggle with information scattered across emails, slide decks, spreadsheets, and personal notes. When insights are fragmented this way, identifying patterns across meetings becomes far more difficult.

That experience ultimately led us to develop the inVision platform.

Rather than treating abstracts, presentations, and field insights as separate pieces of information, the platform was designed to analyze them together. With congress abstracts, notes, images, and scientific discussions combined in a single environment, AI tools can help detect patterns that might otherwise remain hidden.

The goal was not simply faster summarization. It was better scientific intelligence.

For Medical Affairs teams looking to bring congress abstracts, notes, and field insights together in one place, inVision was built specifically for this challenge.

Our platform helps teams organize congress intelligence, analyze scientific content with secure AI tools, and turn large volumes of data into structured insights.

If you’re exploring how AI can support your Medical Affairs workflows, feel free to reach out. We’d be happy to show you how inVision works in practice.